Interaction Design Guidelines for Home Automation

In recent years, home automation has become a reality thanks to the growing availability of commercial solutions such as X10, UPB, Insteon and Z-Wave. In addition, costs keep decreasing, making this type of technology accessible to almost every home.

The idea of a smart home that controls itself, understands its context and performs actions has opened new research fields and opportunities. Even so, opinions still differ on how smart homes can improve the life and care of the elderly or of people with disabilities.

Among people with disabilities, those with impaired motor abilities can take advantage of eye tracking methods to control their homes. These tracking strategies can exploit the user's remaining motor ability (eye movement) to build a communication channel for interaction, opening new possibilities for computer-based communication and control solutions. Home automation can be the link between software and tangible objects, enabling people with motor disabilities to communicate effectively and physically with their environment.

In 2004, the European Network of Excellence COGAIN (Communication by Gaze Interaction) studied the advantages and drawbacks of using eye-based systems in the home automation field. The study found that existing systems offered neither advanced functionalities for controlling appliances nor interoperability between them. The execution of actions was also an issue for the eye-tracking mechanisms available at the time.

A few years later, members of COGAIN proposed 21 guidelines to promote safety and accessibility in eye tracking methods for environmental control applications.

One of the first applications of this set of guidelines was the DOGeye project, a multimodal, eye-based application for home management and control.

COGAIN Guidelines

The COGAIN project was launched in September 2004 with the goal of integrating cutting-edge expertise on gaze-based interface technologies for the benefit of users with disabilities. The project was quite successful: it gathered more than 100 researchers from different companies and research groups, all working on the integration of eye tracking with computers and assistive technologies.

In 2007, the members of the project published the “Draft Recommendations for Gaze Based Environmental Control”. This document proposed a set of guidelines for developing domotic control interfaces based on eye interaction. The guidelines were derived from real use cases in which people with impairments perform daily activities in domotic environments.

The document divided the guidelines into four categories:

- Control Application Safety: guidelines that determine the behaviour of the application under critical situations such as emergencies or alarms.

- Input Methods for Control Application: guidelines about the input methods that the application should support.

- Control Application Significant Features: guidelines impacting the management of commands and events within the house.

- Control Application Usability: guidelines concerning the graphical user interface and the interaction patterns of the control applications.

Each guideline has a priority level, following the W3C style:

- Priority 1: the guideline must be implemented by applications, as it relates to safety and basic features.

- Priority 2: the guideline should be implemented by applications.

There are three important issues that control interfaces must deal with:

- Asynchronous Control Sources: the control interface needs to continuously update the status of the house (icons, menus, labels, etc.) according to the home situation.

- Time Sensitive Behaviour: in critical conditions, such as an emergency, eye control may become unreliable. In these cases, the control interface must offer simple, clear options that are easy to select, and must be able to take the safest action if the user cannot answer in time. This kind of behaviour also covers automated actions triggered by rules, for instance closing the windows when it rains. In any case, the user should be aware that an automated action is being executed and be able to cancel it.

- Structural and Functional Views: control interfaces can organize information according to a structural or a functional logic. The structural view displays information in a physical fashion, mirroring the real layout of elements in the house. The functional view is better suited to actions that do not have a “localized” representation, like turning the anti-theft system on. Good interfaces should find a balance between these two views.
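The time-sensitive behaviour described above can be sketched in a few lines of Python. This is only an illustration, not code from COGAIN or DOGeye: the function name `resolve_alarm`, the option labels and the timeout value are all assumptions made for the example.

```python
import queue


def resolve_alarm(answers, options, default_action, timeout_s=10.0):
    """Return the user's choice, or the default safety action on timeout.

    answers: a queue.Queue fed by the input layer (gaze, keyboard, ...).
    options: the few, clear choices offered to the user.
    default_action: the safest action, used when no valid answer arrives.
    timeout_s: hypothetical waiting time, not a value from the guidelines.
    """
    try:
        choice = answers.get(timeout=timeout_s)
    except queue.Empty:
        return default_action  # the user could not answer in time
    # Anything that is not one of the offered options falls back to safety.
    return choice if choice in options else default_action


if __name__ == "__main__":
    inputs = queue.Queue()
    inputs.put("cancel alarm")
    options = ["cancel alarm", "call for help"]
    print(resolve_alarm(inputs, options, "call for help", timeout_s=0.1))
```

Falling back to the default action whenever the answer is missing or unrecognized keeps the safest outcome as the only path that needs no reliable input.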

A summary of the guidelines follows:

| Guideline | Content | Priority Level |
|---|---|---|
| 1.1 | Provide a fast, easy to understand and multi-modal alarm notification. | 1 |
| 1.2 | Provide the user only few clear options to handle alarm events. | 2 |
| 1.3 | Provide a default safety action to overcome an alarm event. | 1 |
| 1.4 | Provide a confirmation request for critical and possibly dangerous operations. | 1 |
| 1.5 | Provide a STOP functionality that interrupts any operation. | 1 |
| 2.1 | Provide a connection with the COGAIN ETU-Driver. | 1 |
| 2.2 | Support several input methods. | 2 |
| 2.3 | Provide re-configurable layouts. | 2 |
| 2.4 | Support more input methods at the same time. | 2 |
| 2.5 | Manage the loss of input control by providing automated default actions. | 2 |
| 3.1 | Respond to environment control events and commands at the right time. | 1 |
| 3.2 | Manage events with different time critical priority. | 1 |
| 3.3 | Execute commands with different priority. | 1 |
| 3.4 | Provide feedback when automated operations and commands are executing. | 2 |
| 3.5 | Manage scenarios. | 2 |
| 3.6 | Communicate the current status of any device and appliance. | 2 |
| 4.1 | Provide a clear visualization of what is happening in the house. | 1 |
| 4.2 | Provide a graceful and intelligible interface. | 2 |
| 4.3 | Provide a visualization of status and location of the house devices. | 2 |
| 4.4 | Use colors, icons and text to highlight a change of status. | 2 |
| 4.5 | Provide an easy-to-learn selection method. | 2 |
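As a small worked example, the priority levels in the summary above can be encoded as data and used to check which mandatory guidelines an application still lacks. The `missing_guidelines` helper is a hypothetical name introduced here; only the guideline numbers and priorities come from the summary.

```python
# Priority level of each COGAIN guideline, copied from the summary above.
PRIORITY = {
    "1.1": 1, "1.2": 2, "1.3": 1, "1.4": 1, "1.5": 1,
    "2.1": 1, "2.2": 2, "2.3": 2, "2.4": 2, "2.5": 2,
    "3.1": 1, "3.2": 1, "3.3": 1, "3.4": 2, "3.5": 2, "3.6": 2,
    "4.1": 1, "4.2": 2, "4.3": 2, "4.4": 2, "4.5": 2,
}


def missing_guidelines(implemented, priority):
    """List guidelines of the given priority that are not in `implemented`."""
    return sorted(
        g for g, p in PRIORITY.items() if p == priority and g not in implemented
    )


if __name__ == "__main__":
    # Hypothetical application that only covers the alarm-related guidelines.
    done = {"1.1", "1.2", "1.3", "1.4", "1.5"}
    print("Priority 1 still missing:", missing_guidelines(done, 1))
```

Of the 21 guidelines, 9 are Priority 1 and 12 are Priority 2, so a check like this makes it easy to see how far an application is from covering the mandatory subset.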

DOGeye

DOGeye was designed to fill the lack of a home control application compliant with the COGAIN guidelines, through multimodal interaction with special attention to eye tracking technologies.

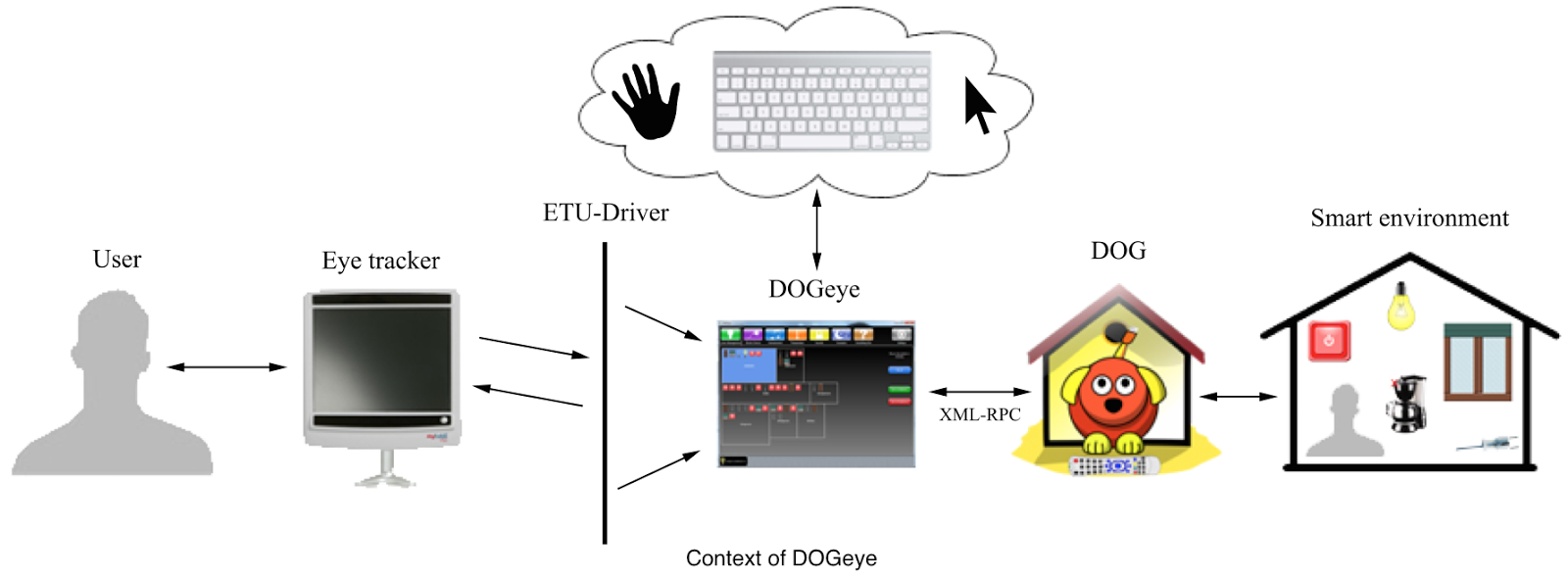

DOGeye communicates with the Domotic OSGi Gateway (Dog) through an XML-RPC connection. This connection allows DOGeye to exchange information with, and control, the devices inside the smart environment. DOGeye can be controlled by gaze, communicating with the eye tracker through a universal driver (the ETU-Driver), or by other input devices such as a keyboard, mouse or touch screen.
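To give an idea of what an XML-RPC exchange between a control application and a gateway looks like, here is a minimal Python sketch using the standard library. It does not reproduce the actual Dog API: the method names `set_state` and `get_state`, the device identifier and the local stand-in server are all assumptions made so that the example runs on its own.

```python
import threading
import xmlrpc.client
from xmlrpc.server import SimpleXMLRPCServer


def start_fake_gateway():
    """Start a local stand-in for the gateway; returns (server, url)."""
    states = {"kitchen_lamp": "off"}

    def set_state(device_id, state):  # hypothetical remote method
        states[device_id] = state
        return True

    def get_state(device_id):  # hypothetical remote method
        return states.get(device_id, "unknown")

    # Port 0 lets the operating system pick a free port.
    server = SimpleXMLRPCServer(("127.0.0.1", 0), logRequests=False)
    server.register_function(set_state, "set_state")
    server.register_function(get_state, "get_state")
    threading.Thread(target=server.serve_forever, daemon=True).start()
    host, port = server.server_address
    return server, f"http://{host}:{port}/"


def turn_on(url, device_id):
    """Client side: send a command, then read the new state back."""
    with xmlrpc.client.ServerProxy(url) as gateway:
        gateway.set_state(device_id, "on")
        return gateway.get_state(device_id)


if __name__ == "__main__":
    server, url = start_fake_gateway()
    try:
        print(turn_on(url, "kitchen_lamp"))
    finally:
        server.shutdown()
        server.server_close()
```

The client only needs the gateway URL and the remote method names, which is what makes this kind of connection convenient for decoupling the user interface from the home technologies behind it.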

Dog, an ontology-based domotic gateway, provides the interaction with the smart home. The gateway integrates and abstracts the functionalities of different domotic systems, offering uniform high-level access to the home technologies.

The interface was designed through an iterative process, starting from an initial sketch of the layout and refining the design iteration by iteration until the final version complied with the COGAIN guidelines. A screenshot and a video of the interface follow.

The interface has 8 main elements arranged in a top menu bar. Each element, when activated, displays a tab with the main details of that element.

- Home Management contains the basic devices present in a house. These are the devices that belong to the mains electricity wiring such as shutters, doors, lamps, etc.

- Electric Device contains the electrical appliances not belonging to the entertainment system, such as a coffee maker.

- Entertainment contains the devices for entertainment, such as media centers and TVs.

- Temperature allows handling the heating and cooling system of the house.

- Security contains the alarm systems, anti-theft systems, etc.

- Scenarios handles the set of activities and rules defined for groups of devices.

- Everything Else contains devices not directly falling in the previous tabs, e.g., a weather station.

- Settings shows controls for starting, stopping and configuring the ETU-Driver.

Scenario

To turn on the lamp in the kitchen and warm up coffee, you would follow these steps:

- First, look briefly at “Home Management” to activate this tab. The map of the kitchen appears right away.

- Then stare at the “Enter the room” tab for a short while. You will then see the list of all devices present in that room.

- To select the lamp, look at it and then stare at the “On” button shown in the command area.

- Finally, to warm up the coffee, look at the “Electric Device” tab and follow the same procedure.
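The difference between looking briefly at an element and staring at it is usually handled with a dwell time: a target is selected only after the gaze has rested on it for long enough. The sketch below illustrates that general technique. It is not DOGeye's actual implementation, and the sample format and threshold values are assumptions.

```python
def dwell_selections(samples, dwell_s):
    """Yield each target the gaze rests on for at least dwell_s seconds.

    samples: iterable of (timestamp_seconds, target_or_None), in time order.
    A target is reported at most once per continuous fixation.
    """
    current, started, fired = None, 0.0, False
    for t, target in samples:
        if target != current:
            # The gaze moved to a new target (or away): restart the timer.
            current, started, fired = target, t, False
        elif target is not None and not fired and t - started >= dwell_s:
            fired = True
            yield target


if __name__ == "__main__":
    # Hypothetical gaze samples: a short look at a tab, then a stare at "On".
    gaze = [
        (0.0, "Home Management"), (0.2, "Home Management"),
        (0.4, "Home Management"), (0.5, None),
        (0.6, "On"), (1.0, "On"), (1.7, "On"), (1.9, "On"),
    ]
    print(list(dwell_selections(gaze, dwell_s=1.0)))
```

Using a short threshold for navigation elements and a longer one for commands is one way to reduce accidental activations while keeping browsing fast.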

Usability Testing of DOGeye

Before the test, participants received a short introduction to the study and provided demographic data. They were then shown a static DOGeye screenshot to gather their first impression of the interface.

The usability testing was divided into three main phases: warm-up, task execution and test conclusion.

The warm-up phase took place after calibrating the eye tracker to the participant’s eyes. The participant then played a simple game to get used to eye tracking interaction. This phase ended once the participant reached a pre-established number of points in the game.

In the task execution phase, each participant was asked to complete a set of nine tasks, one at a time. For two of them, participants were asked to use the think-aloud protocol, to verify the actions performed.

In the last phase, test conclusion, participants were given a questionnaire and asked to rate DOGeye in general, and to rate their agreement with the same statements proposed earlier, just after seeing the screenshot of DOGeye.

The duration of the entire experiment depended on eye tracker calibration problems and on how quickly participants answered the questions, but it ranged between 20 and 30 minutes.

The application was tested by 8 participants (5 female and 3 male, aged 21 to 45), who used DOGeye in a controlled environment. Each participant performed the nine tasks, with Dog simulating the behaviour of a realistic home. The quality of each participant's eye movement was not taken into account in the study, since eye tracking is only the input modality.

The tasks were the following:

- Task 1: Turn on the lamp in the living room.

- Task 2: Plug in the microwave oven in the kitchen.

- Task 3: Find a dimmer lamp, turn it on and set its luminosity to 10%.

- Task 4: Cancel the alarm triggered by the smoke detector.

- Task 5: Turn on all the lamps in the lobby.

- Task 6: If the heating system in the bedroom and in the kitchen is off, turn it on.

- Task 7: Set the heating system for the entire house to 21 degrees.

- Task 8: Send a general alarm to draw attention in the house.

- Task 9: Read the smoke detector status and expand the video of the room to full screen.

Results of the Usability Testing

The results of the usability testing were positive and provided useful insights into an application explicitly designed for eye tracking support.

A short summary of the findings follows:

- Some users suggested restructuring the top menu, for example by splitting “Home Management” into two tabs, “Lighting System” and “Doors and Windows”. The “Everything Else” tab should also be removed, because participants could not understand its meaning.

- None of the participants used the “multiple selection” feature; all of them used “single selection”. The feature was therefore removed from the application, except in the “Temperature” tab, where multiple selection is the only viable modality.

- The users appreciated the vocal feedback and the division into tabs.

- Participants particularly liked the presence of a camera in the “Security” tab.

The study concluded with very good feedback from participants, who pointed out some errors in the design and motivated the developers to keep progressing in the field and enhancing the product. It is also a common starting point for improving the quality of life of people with motor impairments.

Reference:

- De Russis, Luigi, Emiliano Castellina, Fulvio Corno, and Darío Bonino. "DOGeye: Controlling Your Home with Eye Interaction." PORTO Publications Open Repository TOrino. Politecnico Di Torino, 16 June 2011. Web. 10 May 2014.
